The Fight for AI Infrastructure Is a Hardware Fight

Every conversation about AI in 2026 eventually arrives at the same constraint: compute. Models are getting more capable. Agent systems are being deployed at scale. Inference demand is growing faster than anyone forecast 18 months ago. And the companies that control the hardware that runs all of this hold a form of leverage that is unprecedented in the history of the technology industry.

Nvidia remains the dominant player. Its market capitalization stands at $4.5 trillion. Its Blackwell architecture, which began shipping in 2025, is already being superseded by the upcoming Rubin platform. AMD is competing harder than at any point in the past decade, deploying the Helios rack architecture on open standards that hyperscalers find attractive. Broadcom is designing custom chips for the world’s largest AI companies. And a new generation of startups, led by Cognichip, is trying to use AI to change how chips are designed entirely.

Nvidia Vera Rubin: Understanding What 3.6 ExaFLOPS Really Means

Nvidia’s Vera Rubin platform will replace the Blackwell architecture in the second half of 2026. It delivers 3.6 ExaFLOPS of dense FP4 compute, about 3.3 times Blackwell’s throughput. The platform also cuts token costs by 10× compared to Grace Blackwell, so running AI inference workloads on Rubin hardware will cost roughly one-tenth of what it did on the previous generation.

Token cost reduction is not a marketing metric. It is the number that determines whether a business model is viable. At current AI inference costs, many agentic applications are economically marginal: the compute cost of running an AI agent continuously across a working day is substantial enough that only high-value use cases can absorb it. At one-tenth the cost, the economic threshold for viable AI agent deployment drops dramatically, opening up categories of applications that are currently impractical.
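To make the threshold argument concrete, here is a minimal sketch in Python. The token volume, the price per million tokens, and the value an agent creates per day are hypothetical placeholders chosen for illustration, not figures from Nvidia or any provider. Only the 10× reduction factor comes from the claim above.

```python
def daily_agent_cost(tokens_per_day: float, price_per_million_tokens: float) -> float:
    """Compute cost in dollars of running one agent for a day."""
    return tokens_per_day / 1_000_000 * price_per_million_tokens


def is_viable(value_per_day: float, cost_per_day: float) -> bool:
    """An agent is viable when the value it creates exceeds its compute cost."""
    return value_per_day > cost_per_day


# Hypothetical inputs (assumptions, not measured data).
TOKENS_PER_DAY = 50_000_000   # tokens one always-on agent consumes per day
BASELINE_PRICE = 2.00         # dollars per million tokens on current hardware
COST_REDUCTION_FACTOR = 10    # the 10x reduction claimed for Rubin
VALUE_PER_DAY = 40.00         # dollars of value the agent creates per day

baseline_cost = daily_agent_cost(TOKENS_PER_DAY, BASELINE_PRICE)
reduced_cost = daily_agent_cost(
    TOKENS_PER_DAY, BASELINE_PRICE / COST_REDUCTION_FACTOR
)

for label, cost in (("baseline", baseline_cost), ("reduced", reduced_cost)):
    viable = is_viable(VALUE_PER_DAY, cost)
    print(f"{label}: ${cost:.2f} per day, viable={viable}")
```

With these placeholder inputs, the same agent moves from costing more than it returns to costing well under it. The point is the shape of the comparison, not the specific dollar amounts.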

The Rubin Ultra, arriving in 2027, will offer 15 ExaFLOPS of FP4 inference compute through its NVL576 configuration. And Nvidia’s Feynman architecture, already announced for 2028, extends that cadence well beyond the current competitive horizon.
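The generational figures quoted in this section imply a few ratios that are easy to check. The short Python sketch below only rearranges the numbers already given. It assumes the Rubin and Rubin Ultra figures are measured on a comparable basis, which may not hold exactly because the configurations and precision modes differ.

```python
# Figures quoted in this section (vendor claims, not independent benchmarks).
RUBIN_EXAFLOPS = 3.6         # Vera Rubin, dense FP4
RUBIN_OVER_BLACKWELL = 3.3   # quoted Rubin-to-Blackwell ratio
RUBIN_ULTRA_EXAFLOPS = 15.0  # Rubin Ultra NVL576, FP4 inference

# Blackwell baseline implied by the two Rubin figures.
implied_blackwell = RUBIN_EXAFLOPS / RUBIN_OVER_BLACKWELL

# Generation-over-generation ratios.
ultra_over_rubin = RUBIN_ULTRA_EXAFLOPS / RUBIN_EXAFLOPS
ultra_over_blackwell = RUBIN_ULTRA_EXAFLOPS / implied_blackwell

print(f"Implied Blackwell baseline: {implied_blackwell:.2f} ExaFLOPS")
print(f"Rubin Ultra vs Rubin:       {ultra_over_rubin:.2f}x")
print(f"Rubin Ultra vs Blackwell:   {ultra_over_blackwell:.2f}x")
```

On these figures the implied Blackwell baseline is roughly 1.1 ExaFLOPS, Rubin Ultra is a little over 4 times Rubin, and just under 14 times Blackwell.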

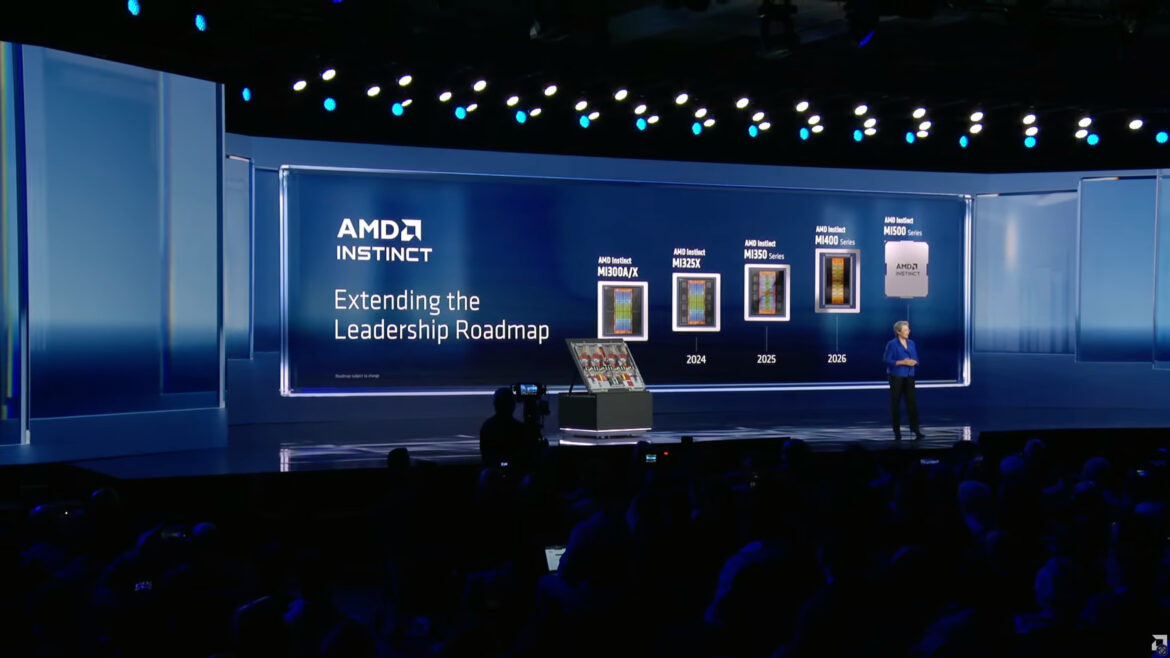

AMD Helios: The Open Standard Challenger

AMD’s most significant competitive move in 2026 is not a chip announcement. It is an architecture philosophy. The Helios rack system, scheduled for Q3 2026 deployment, uses the Open Rack Wide standard that Meta co-developed. This open standard allows hyperscalers to avoid vendor lock-in to Nvidia’s proprietary NVLink interconnect ecosystem.

Helios racks hold 72 of AMD’s MI450 Series GPUs, matching Nvidia’s NVL72 configuration in unit count. Oracle has already committed to deploying 50,000 of these chips. OpenAI is listed as a key early customer. AMD CEO Lisa Su has described the Helios as the world’s best AI rack, a direct challenge to Nvidia’s rack-scale dominance.

The MI500 series, set for 2027 on a 2-nanometer process with HBM4E memory, is projected by AMD to deliver up to a 1,000x increase in AI performance over its MI300X GPUs, which launched in late 2023. AMD’s stock has risen 78% over the past 12 months, reflecting investor confidence that the company’s open-standard strategy is attracting the hyperscaler contracts that will define data center purchasing for the next decade.

Broadcom: The Custom Chip Play Worth $100 Billion

Broadcom occupies a distinctive position in the AI hardware market. Rather than competing with Nvidia and AMD on general-purpose GPU performance, Broadcom designs custom AI chips for specific customers. Its best-known work is Google’s Tensor Processing Unit, which it has co-designed through all seven generations. Anthropic has placed orders totaling $21 billion for Broadcom-designed TPU-based compute. A rumored 10-gigawatt deal with OpenAI, if confirmed, would cement Broadcom as the dominant custom silicon provider for the AI industry.

Broadcom’s AI semiconductor revenue reached $12.2 billion in fiscal 2024 and is expected to roughly double in fiscal 2026. The company is projecting $100 billion in AI chip revenue by the end of 2027. These are not speculative projections. They are based on signed contracts with the largest AI companies in the world.

The custom chip advantage is real for large-scale AI deployments. A chip designed specifically for one company’s model architecture and training workflow will outperform a general-purpose GPU at that specific task, often by a significant margin. The tradeoff comes down to cost and flexibility: designing custom chips is expensive, and companies cannot repurpose them as easily as general-purpose hardware. For companies running AI at the scale of Google, Anthropic, and OpenAI, the economics favor custom silicon.

Cognichip: Using AI to Design AI Chips

Cognichip raised $60 million this week to apply AI directly to the chip design process. The company claims it can reduce chip development costs by more than 75% and cut development timelines in half. If those claims hold at scale, Cognichip is building a platform technology for the semiconductor industry rather than a single chip company.

Traditional chip design is one of the most expensive and time-consuming engineering processes in existence. A standard custom chip development cycle runs 18 to 36 months and requires teams of hundreds of highly specialized engineers working through iterative design, simulation, physical implementation, and verification stages. Automating significant portions of this process with AI compresses the timeline, reduces the headcount required, and makes chip design accessible to companies that cannot currently justify the investment.
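As a rough illustration of what Cognichip’s claims would mean if they held, the Python sketch below applies a 50% timeline cut and a 75% cost cut to the 18-to-36-month range above. The baseline project cost is a hypothetical placeholder, since the article gives no dollar figure, and the function name is invented for this example.

```python
def apply_claimed_savings(months, cost_musd, time_factor=0.5, cost_cut=0.75):
    """Return (months, cost in $M) after applying the claimed reductions.

    time_factor=0.5 models "timelines cut in half".
    cost_cut=0.75 models the lower bound of "more than 75%" cost reduction.
    """
    return months * time_factor, cost_musd * (1.0 - cost_cut)


BASELINE_MONTHS = (18, 36)        # development cycle range from the text
HYPOTHETICAL_COST_MUSD = 100.0    # assumed baseline cost, illustration only

for months in BASELINE_MONTHS:
    new_months, new_cost = apply_claimed_savings(months, HYPOTHETICAL_COST_MUSD)
    print(
        f"{months} months at ${HYPOTHETICAL_COST_MUSD:.0f}M -> "
        f"{new_months:.0f} months at no more than ${new_cost:.0f}M"
    )
```

Under these assumptions the 18-to-36-month cycle becomes 9 to 18 months, and a project at the assumed baseline cost would need at most a quarter of the budget.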

The implications extend beyond cost savings. If chip design becomes faster and cheaper, the global production of specialized chips could rise dramatically. As a result, more industries could adopt domain-specific hardware for applications like robotics, medical devices, and autonomous vehicles, which currently rely on adapted general-purpose silicon.

The AI PC: Intel, AMD, and Qualcomm’s Consumer Battle

At CES 2026 in January, Intel, AMD, and Qualcomm each unveiled new AI PC chip platforms. Intel launched its Core Ultra Series 3 processors, featuring an upgraded neural processing unit designed for local AI inference. AMD unveiled the Ryzen AI 400 series, the company’s first Copilot+ desktop CPU. Qualcomm brought its Snapdragon X2 Elite, continuing its push to establish ARM-based architecture as the standard for high-performance, low-power AI PCs.

The AI PC category is defined by on-device neural processing capability, the ability to run AI models locally without sending data to the cloud. For consumers, this means faster AI responses, better privacy, and AI features that work without an internet connection. For the chip companies, it means a new performance metric and a new product category with pricing power.

Apple’s M5 Max, which beat a 96-core AMD Threadripper Pro in Geekbench testing, demonstrates the performance advantage that tightly integrated silicon-software co-design can achieve. Apple’s approach, designing both the chips and the operating system, continues to set the benchmark that Intel, AMD, and Qualcomm are racing to match.

Export Controls and the Geopolitics of Chips

The U.S. government’s ongoing management of AI chip export controls has created a bifurcated global market. Nvidia has been working to resume sales to China following a 25% tariff agreement with the Trump administration. Policymakers have proposed a worldwide framework that would require government officials in host countries to certify large chip deployments of 200,000 GPUs or more. Although the framework has not yet been fully implemented, it signals a broader shift toward treating AI hardware as a geopolitical instrument.

Chinese chip firms have responded to export restrictions by capturing almost 50% of the domestic AI accelerator market. Companies including Huawei and Biren are shipping chips optimized for inference workloads under restricted conditions. DeepSeek V4, expected in Q2 2026, will run on Huawei chips, showing that developers can build and deploy frontier-class AI models entirely on non-Nvidia and non-AMD hardware.

The Infrastructure Numbers Behind the Hardware Race

The scale of the hardware investment underway is difficult to fully grasp without the numbers. OpenAI’s $122 billion fundraise is partly intended to fund 10 gigawatts of custom AI accelerator development in partnership with Broadcom. Microsoft committed $10 billion to Japan for AI infrastructure. Google is building a data center in Texas alongside a natural gas plant that will emit an estimated 4.5 million tons of greenhouse gases per year. Meanwhile, analysts project that the global AI hardware market, currently valued at around $67 billion, will grow to $1.3 trillion by 2032.
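The market forecast above implies a compound annual growth rate that is worth making explicit. The Python sketch below assumes the $67 billion figure refers to 2026 and the $1.3 trillion figure to 2032, giving six years of growth. The article does not state the base year, so treat that span as an assumption.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate that takes start_value to end_value."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


START_BILLIONS = 67.0     # current market size quoted in the text
END_BILLIONS = 1300.0     # projected market size quoted in the text
YEARS = 2032 - 2026       # assumed span; the base year is not stated

cagr = implied_cagr(START_BILLIONS, END_BILLIONS, YEARS)
print(f"Implied growth rate: {cagr:.1%} per year")

# Sanity check: compounding the rate forward should recover the end value.
projected = START_BILLIONS * (1.0 + cagr) ** YEARS
print(f"Recovered 2032 value: ${projected:.0f} billion")
```

Under that assumption the forecast requires the market to grow by roughly 64% per year, every year, through 2032. A longer assumed span would lower the rate, but it stays steep under any reasonable reading.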

Apart from the analyst forecast, these numbers are not projections about a distant future. They are capital commitments being executed right now, in the form of construction contracts, chip orders, and semiconductor foundry reservations. The AI hardware race of 2026 is building the physical infrastructure layer that will determine who runs the world’s AI workloads for the next decade.